AUO Corporation Teaches Old Robots New Tricks

Following the success of the inaugural Intel® DevCup, the competition returned in 2022 to illustrate Taiwanese ingenuity in AI development. Provided with a selection of hardware supporting the Intel® Distribution of OpenVINO™ Toolkit, a total of 258 teams vied for a prize fund of more than NT$1 million.

AAEON’s new UP Xtreme i12 Edge was selected by two of the participating teams, with both reaching the finals. In this two-part series, we will explore each team’s objectives, ingenuity, and the broader impact of their solution. The first application, produced by the Smart Automation team from AUO Corporation, won the Best Work and Popularity awards.

The Aim

The Smart Automation team’s objective was to develop a solution that lets older automated industrial robots autonomously detect and correct their own errors while reducing occupational safety risks. To achieve this, the team sought to provide the robot with decision-making capabilities, including the ability to return control to the user, based on audiovisual data and AI inferencing. The team characterized this as giving the robot the means to ‘see, hear, make decisions, and return control’.

The main barrier to implementing such functions in older automated robots is that AI integration typically requires replacing antiquated machinery in its entirety, which interrupts the factory’s production. In addition, manufacturers are often hesitant because of the extra funds needed to deploy and maintain more sophisticated assembly line machinery.

Application Architecture

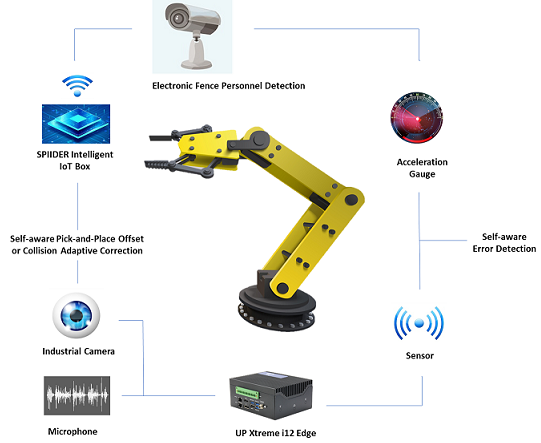

The application collected and analyzed both visual and audio data, using the UP Xtreme i12 Edge, powered by 12th Generation Intel® Core™ i7/i5/i3/Celeron® Processors, as the AI Edge system alongside AUO Corporation’s self-developed SPIIDER intelligent IoT box. The UP Xtreme i12 Edge’s microphone was used to capture audio frequency data, while its 2.5GbE LAN port supported an industrial camera. The camera was also connected to the SPIIDER box to facilitate visual data collection and data return control.

To give the robot the ability to ‘hear’, the team employed a two-pronged approach. First, the team developed a denoising model that removes environmental background noise so that sounds can be detected accurately. Second, the UP Xtreme i12 Edge ran audio inferencing models to distinguish the sound of the robot’s regular state from abnormal sounds that could indicate machine errors.
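The two-stage audio approach described above can be illustrated with a deliberately simple sketch. It is not the team’s method: the article does not describe their models, so a noise-floor subtraction stands in for the denoising model and a z-score threshold stands in for the audio inferencing model. All function names, parameters, and thresholds here are hypothetical.

```python
import math

def frame_rms(samples, frame_size):
    """Split samples into non-overlapping frames and return each frame's RMS level."""
    frames = [samples[i:i + frame_size]
              for i in range(0, len(samples) - frame_size + 1, frame_size)]
    return [math.sqrt(sum(x * x for x in frame) / frame_size) for frame in frames]

def denoise_levels(levels, noise_floor):
    """Stand-in for a denoising model: subtract an estimated
    background level from each frame and clamp the result at zero."""
    return [max(level - noise_floor, 0.0) for level in levels]

def is_abnormal(levels, normal_mean, normal_std, z_threshold=3.0):
    """Stand-in for an audio inferencing model: flag the clip when any
    frame deviates from the normal-state level by more than
    z_threshold standard deviations (assumed threshold)."""
    return any(abs(level - normal_mean) > z_threshold * normal_std
               for level in levels)
```

In this sketch, `normal_mean` and `normal_std` would be measured from recordings of the robot in its regular state, after the same noise-floor subtraction. A learned model would replace both stand-ins while keeping the same denoise-then-classify order.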

Audio and visual data were then combined to produce an overall risk assessment of the situation. Based on this assessment, the robot determined whether to self-correct or to cease operation and return the decision to the user via a human-machine interface.
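As a rough illustration of how such a risk assessment might drive the decision, the sketch below fuses an audio anomaly score with two visual signals and maps the result to one of three actions. The article does not say how the team combined its data, so the weighted sum, the thresholds, and every name here (`Observation`, `assess_risk`, `decide`) are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum

class Action(Enum):
    CONTINUE = "continue"
    SELF_CORRECT = "self_correct"
    RETURN_CONTROL = "return_control"  # stop and hand the decision to the operator

@dataclass
class Observation:
    audio_anomaly: float      # hypothetical score: 0.0 (normal) to 1.0 (abnormal)
    position_error_mm: float  # hypothetical visual offset from the target position
    person_in_fence: bool     # hypothetical electronic-fence detection result

def assess_risk(obs, audio_weight=0.5, max_error_mm=5.0):
    """Combine audio and visual evidence into one risk score in [0, 1]."""
    if obs.person_in_fence:
        return 1.0  # a person inside the fence overrides everything else
    visual = min(abs(obs.position_error_mm) / max_error_mm, 1.0)
    return audio_weight * obs.audio_anomaly + (1.0 - audio_weight) * visual

def decide(obs, correct_above=0.2, stop_above=0.7):
    """Map the risk score to an action using assumed thresholds."""
    risk = assess_risk(obs)
    if risk >= stop_above:
        return Action.RETURN_CONTROL
    if risk >= correct_above:
        return Action.SELF_CORRECT
    return Action.CONTINUE
```

For example, `decide(Observation(0.1, 2.0, False))` yields `Action.SELF_CORRECT` under these assumed weights, while any observation with `person_in_fence=True` yields `Action.RETURN_CONTROL`. Treating personnel detection as a hard override rather than one more weighted term is a design choice of this sketch, made so that a safety signal can never be averaged away.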

Impact

The team successfully integrated edge AI with existing industrial robotic infrastructure, achieving adaptive correction accurate to within 0.5 mm. Consequently, automated pick-and-place processes that rely on precision were made safer and more efficient. By making industrial workspaces safer for workers through electronic fence personnel detection, and by detecting and self-correcting errors, the application showed that AI integration is possible with older automated robots without the production downtime and expense of replacing the machinery.

This achievement opens the door to making AI much more accessible to manufacturers that wish to benefit from it but lack the resources to replace their assembly line infrastructure with entirely new machinery. Should this solution be adopted more widely, it could have a substantial impact by making industrial AI solutions more modular, accessible, and cost-effective for businesses. This would in turn extend the working life of older automated robots while providing a safer working environment for factory personnel.
